Welcome to HCI TECH LAB!

Pioneering Embodied and Physical Human–AI Interaction

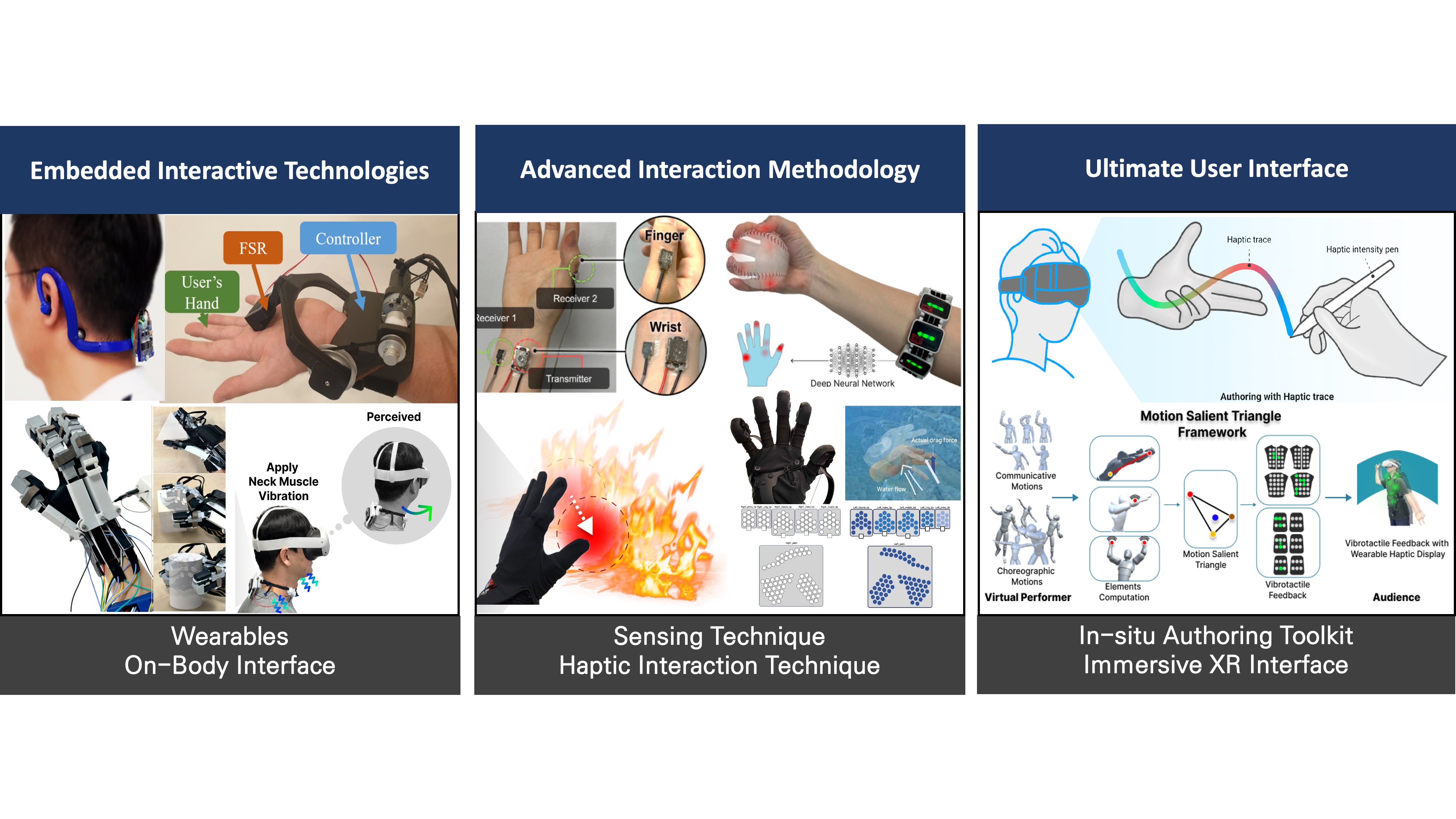

The Human-Centered Interactive Technologies Lab (HCI Tech Lab) is a multidisciplinary research group at KAIST. Our mission is to empower human potential by bridging the physical and digital worlds through embodied intelligence (Physical AI) and immersive technologies (XR).

- Advanced Sensing Technology

- Multimodal Haptic Technology

- Authoring User Experience

News

Latest news from HCI Tech Lab

Lab Activity

May 15 2026

Great time with the lab celebrating Teacher’s Day together. Always thankful for the amazing students!

Summer 2026 Undergraduate Research Internship

May 4 2026

We are looking for research interns (including URP) for Summer 2026. The application deadline is May 15th. You can find more information here.

News

Google Student Researcher Internship

Apr 24 2026

News

CHI 2026 Participation

Apr 23 2026

Publication

A paper accepted to ToH

Apr 16 2026

Our paper, VibGrasp: Spatiotemporal Vibration Based Multimodal Haptic Rendering with a Lightweight Exo-Glove for 3D Shape Perception, led by Hojeong, has been accepted to IEEE Transactions on Haptics (ToH).

News

Lab Activity (KAIST Strawberry Party)

Apr 3 2026

Lab gathering at the KAIST Strawberry Party.

News

Visit from Google AR & VR

Mar 22 2026

News

VR 2026 Participation

Mar 22 2026

Research

Highlighted & Recent Publications from HCI Tech Lab

Selected Publication

Recent Publication

VibGrasp: Spatiotemporal Vibration Based Multimodal Haptic Rendering with a Lightweight Exo-Glove for 3D Shape Perception

IEEE Transactions on Haptics

Align-to-Scale: Mode Switching Technique for Unimanual 3D Object Manipulation with Gaze-Hand-Object Alignment in Extended Reality

Proceedings of the ACM on Computer Graphics and Interactive Techniques (ETRA26)

HOICraft: In-Situ VLM-based Authoring Tool for Part-Level Hand-Object Interaction Design in VR

CHI 2026

Finger Tendon Vibration: Finger Movement Illusions for Immersive Virtual Object Interaction

CHI 2026